When Learning Was Harder (and Therefore More Valuable)

Over the past few months, I’ve often found myself interviewing very young candidates as part of an internal academy my company is currently building.

It’s a stimulating experience, and every time it leads me to reflect on how much the way people enter the IT world has changed.

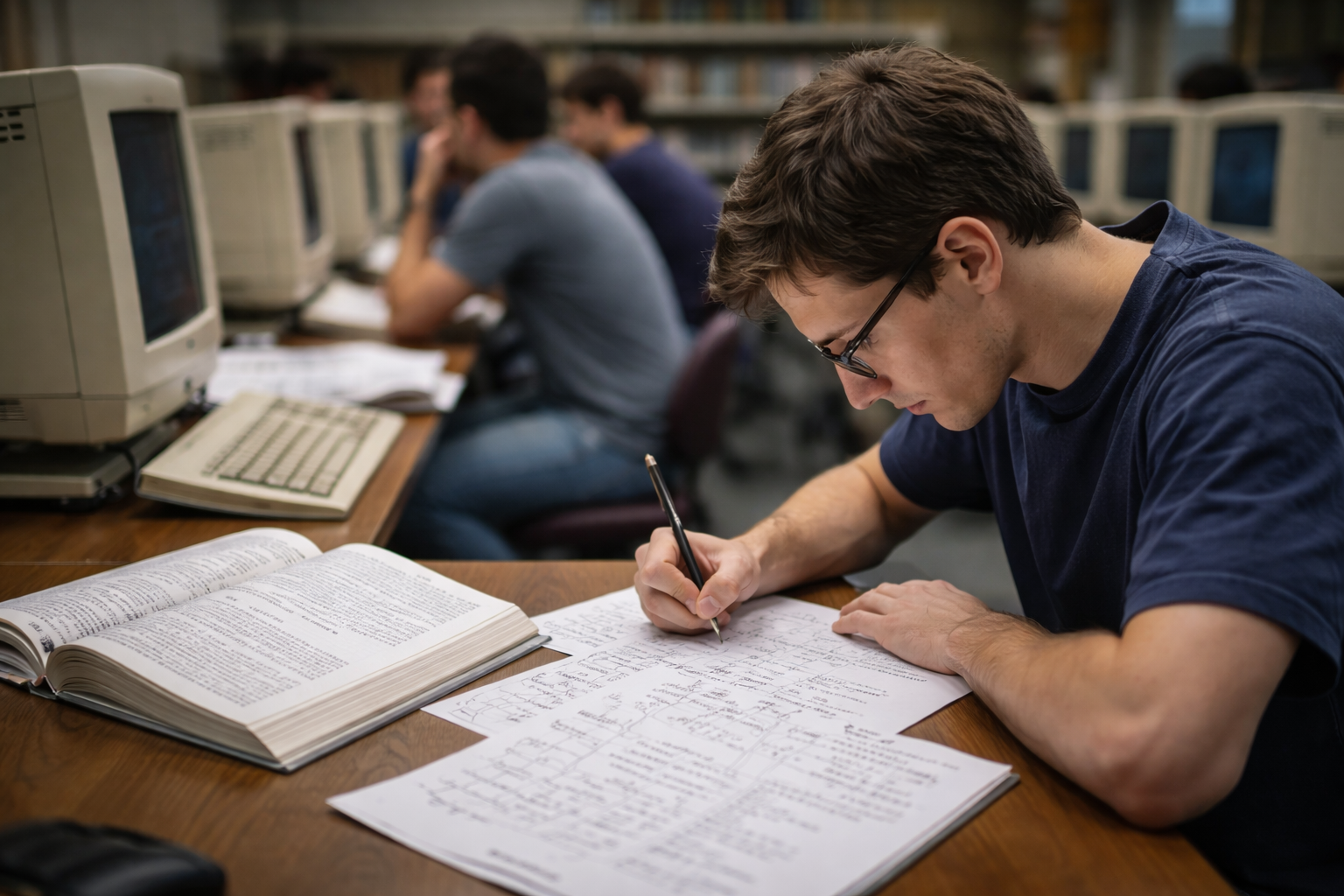

I think back to my early university years, when working on a Linux machine literally meant lining up in a lab. There were only a few machines available, often a shared Pentium II, and the time you had was limited. That “dead” time, spent waiting for a terminal, wasn’t really dead at all: you would take out a sheet of paper and start thinking.

You wrote the program by hand, reasoning about procedures, inputs, memory usage. You arrived at the lab with the code already thought through—sometimes fully written on paper, other times prepared in a text editor and copied onto a floppy disk—and only then did you translate it into C, using vi, malloc, realloc, and manual memory management.

Allocations that looked perfect on paper would then blow up into a segmentation fault.

And how did you deal with that?

There was no tool you could simply ask for the answer. You went back to the books, reread Kernighan & Ritchie, redesigned the program flow over and over again, and discussed it with friends and colleagues, trying to truly understand what was going on.

Looking back, no training ground was more formative than that.

Not having all the information at your fingertips—having very little of it—forced us to develop critical thinking and to deeply understand systems, languages, and architectures even before writing the first line of code.

I clearly remember that my first Java exam was a written one: all the code on paper. I hated it at the time. At that age, you want to write the best code in the world—you imagine yourself with headphones on, typing at lightning speed.

But without that process, I would never have internalized the fundamental concepts of object-oriented programming. Without realizing it, I was learning how to drive the tool, rather than being driven by it.

I spent entire days on complex recursion, optimization problems, dynamic programming, red-black trees, balanced data structures—trying to understand the trade-offs, the decisions, the “why”. Think first, then execute.

I was also fortunate enough to encounter extraordinary professors (in those years, the University of Naples Federico II had many of them), who didn’t teach hype—there was plenty of it even back then—but taught a deep understanding of systems. Looking back today, I feel lucky.

At this point, I can’t help but think about those who are entering the IT world today, in an era where generative AI drives many decisions and everything seems immediately available.

Perhaps real skills are acquired not in the final result, but along the path that leads to it. If you lose that path, what do you really take home? Just the software that was produced?

It’s true: today, anyone who wants to dive deeper into a topic no longer needs to pore over manuals for days. They can use ChatGPT, Claude, Copilot. They can write code faster, get immediate examples, explore solutions in minutes.

But this often pushes people toward the easiest path: getting the result while skipping the reasoning that should come before it.

And that’s where the risk lies.

These tools are extraordinary and should be used. They improve productivity and open up enormous possibilities. But they must be used to learn, not just to deliver faster.

Just like at university, when we used to read the code of a more skilled colleague, or try to “steal” an architectural pattern from a professor to see if we could fit it into our own systems.

Always learning.

Because in the end, the only thing that truly matters is competence.

And competence is not measured by the number of lines of code written, but by understanding systems, by the ability to identify a problem and connect the dots (as people like to say nowadays).

But behind that “connecting the dots” there must be a deep knowledge of languages, architectures, and systems.

Those starting today should use generative AI precisely for this purpose: to build a deeper growth path, not a more superficial one.

Use it to understand, to explore, to challenge your own solutions. Not just to obtain an output.

The code will belong to the client. It will become obsolete and, over time, it will be decommissioned—but that is not the only deliverable.

The real deliverable is the journey that leads us to build something, the set of skills that remains and that we carry with us throughout our careers.

In an era like this, competence does not lose value—it becomes fundamental.

Not because generative AI is useless, but because not everything can be generated, not everything can be delegated, and above all, not everything can be automatically understood.

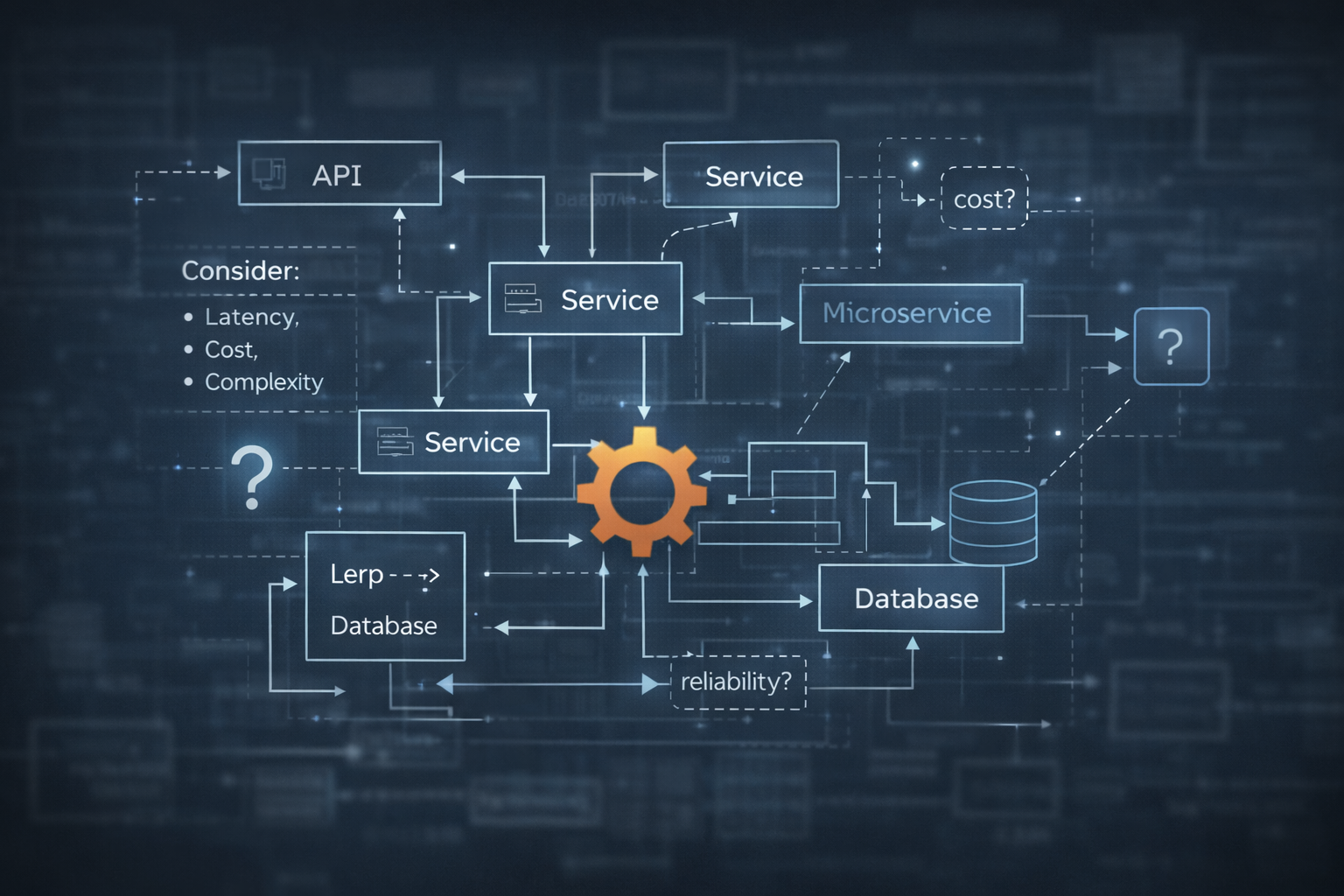

Today, access to these tools seems cheap, immediate, almost unlimited. But even now, if you look closely, other signals are already emerging: complex pricing models, costs that are hard to estimate upfront, non-intuitive metrics, bundles that hide real consumption.

It’s not always clear how much we are paying, for what, and under what conditions those costs will remain stable over time. This opacity is not accidental—it’s a pattern we’ve seen every time a technology becomes critical infrastructure.

It’s therefore legitimate to ask whether this phase of apparent accessibility also serves to make generative AI pervasive and indispensable, creating a dependency that will be hard to unwind in the future.

The history of cloud computing has taught us that lock-in rarely arrives as an explicit choice. More often, it’s the result of many small decisions, made when the cost seems negligible and the convenience too high to give up.

That’s precisely why it becomes essential not to lose autonomy. Knowing how to use generative AI is now a necessary skill—but not a sufficient one. The real difference will be made by those who know when to use it, where to use it, and when to step away from it, while maintaining the ability to build, modify, and understand systems even without it.

AI can suggest a solution, accelerate an implementation, help explore a problem. But it cannot take responsibility for trade-offs, real costs, architectural consequences, nor can it guarantee that a choice will remain valid over time.

When efficiency becomes a necessity rather than an option, when costs start to matter for real and not just on paper after the fact, we will need people who know what to turn off, what to simplify, what to rebuild.

Code can be generated, rewritten, optimized, and eventually decommissioned. But an understanding of systems, architectures, costs, and failure modes cannot be generated. It is the result of a journey, of conscious decisions, of mistakes faced and understood. And it is precisely this body of knowledge that allows us to avoid unnecessary lock-in, preserve technological freedom, and adapt when the context changes.

For those entering this world today, the message is not “don’t use generative AI”, but “use it in the right way”: not to trivialize a task, not to skip reasoning, but to expand your skills.

Tools must be mastered, studied, and exploited to their fullest—but at the same time, we must build the foundations to avoid being mastered by them.

Because the real risk lies not in using AI, but in forgetting how to work without it.

Those who believe AI solves everything, does everything, and replaces thinking are living on hype. We are not. We are engineers, and engineers don’t sell hot air.