Connecting Kubernetes Clusters Intelligently with Cilium Cluster Mesh

Modern Kubernetes architectures rarely stop at a single cluster. Teams often run multiple clusters to address different needs, such as:

- geographic distribution

- high availability

- environment separation (dev, staging, production)

- team isolation

- management of very large platforms

However, as soon as we move to a multi-cluster architecture, a new challenge immediately appears.

How can we allow applications distributed across different clusters to communicate as if they were part of the same system?

Pods need to be able to communicate with each other.

Services need to be globally discoverable.

Traffic should be able to be balanced across multiple clusters.

And security policies must continue to work consistently.

This is exactly the problem that Cilium Cluster Mesh aims to solve.

The Kubernetes Multi-Cluster Problem

A single Kubernetes cluster provides many powerful capabilities:

- service discovery

- pod-to-pod networking

- load balancing

- network policies

The problem is that all these features are limited to a single cluster.

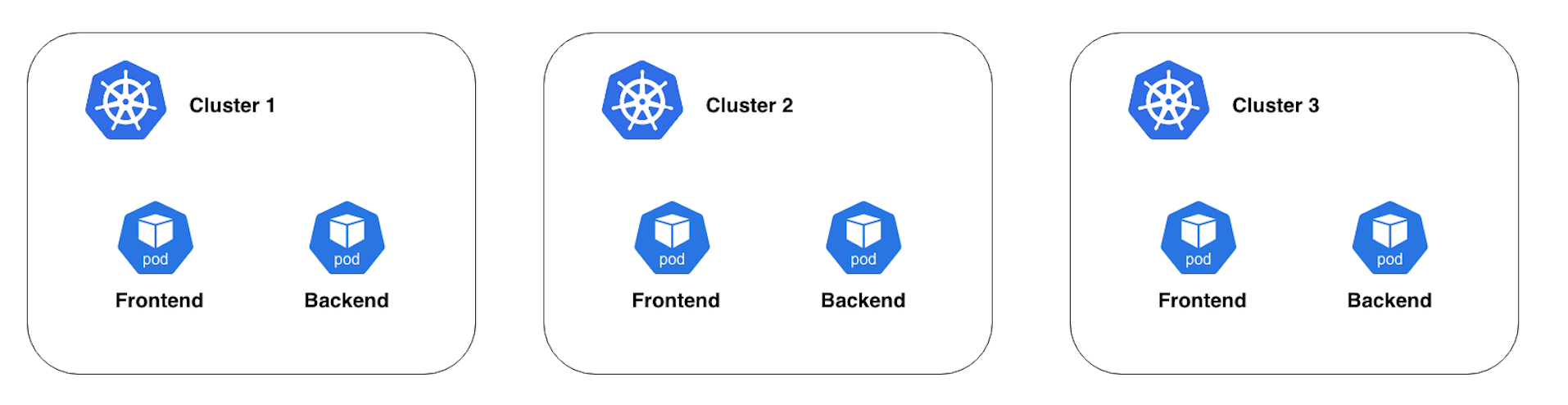

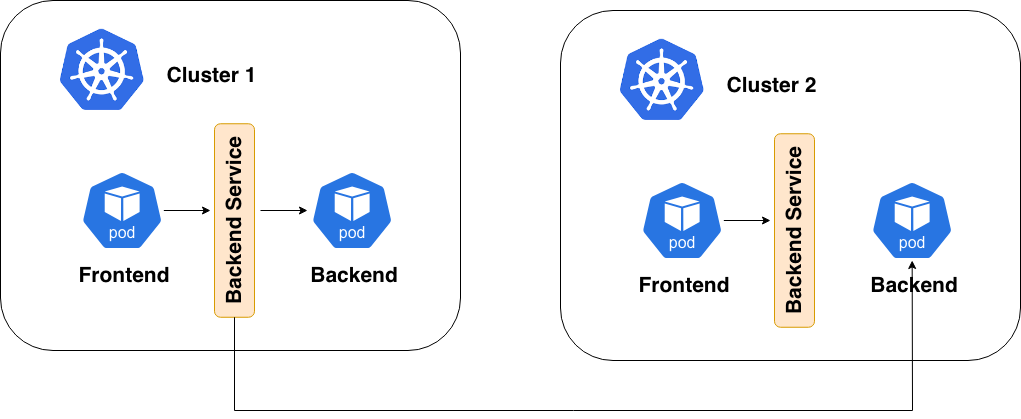

If we deploy the same application across three clusters, the situation looks like this:

Each cluster operates independently.

A frontend running in cluster A cannot automatically discover or reach a backend running in cluster B or C.

Services, network policies, and the pod network itself are all local to each cluster.

In other words, each cluster becomes its own networking island.

This makes it significantly more complex to build:

- multi-region services

- systems with cross-cluster failover

- large-scale distributed applications

And this is where Cluster Mesh comes into play.

A Quick Introduction: What Is Cilium?

Before diving into Cluster Mesh, it’s worth taking a step back and briefly introducing Cilium.

Kubernetes does not implement pod networking itself. Instead, it delegates it to plugins that implement the Container Network Interface (CNI). These plugins are responsible for providing fundamental networking capabilities such as:

- pod-to-pod communication

- traffic routing

- IP address management

- enforcement of network policies

Over the years, several widely used CNI solutions have emerged, including Flannel, Calico, Weave, and Canal.

Cilium is one of the most modern and innovative CNIs in this ecosystem. Unlike many traditional solutions that rely heavily on iptables, Cilium uses eBPF, a Linux kernel technology that enables networking, security, and observability to be implemented in a far more efficient and flexible way.

Thanks to this approach, Cilium does much more than simply provide networking between pods. It introduces advanced capabilities such as:

- identity-based workload security

- advanced network observability

- service mesh integration

- multi-cluster networking

In recent years, Cilium has become increasingly popular in the Kubernetes ecosystem and is now widely used in many cloud platforms and production environments.

It is a very rich and interesting tool that certainly deserves a deeper exploration (perhaps in a dedicated article). In this article, however, we will focus on one of its most powerful features: Cluster Mesh.

What Is Cilium Cluster Mesh?

Cluster Mesh is a Cilium feature that allows multiple Kubernetes clusters to be connected, creating a single logical multi-cluster network.

The core idea is quite simple:

- clusters remain independent

- but workloads can discover and communicate with each other

From the perspective of applications, pods distributed across different clusters can behave as if they were running in the same networking environment.

What Cluster Mesh Actually Enables

Communication Between Pods Across Different Clusters

With Cluster Mesh, pods can communicate even when they are running in different clusters. A pod in cluster A can directly reach a pod in cluster B.

This works because clusters share information about:

- pod endpoints

- nodes

- available services

From the application’s perspective, the infrastructure simply expands, rather than becoming more complex.

Multi-Cluster Service Discovery

Cluster Mesh allows clusters to share information about services.

Each cluster can know:

- which services exist in other clusters

- which endpoints make up those services

- where they are located in the network

This makes it possible to implement distributed service discovery across clusters.

Global Services and Cross-Cluster Load Balancing

One of the most interesting features enabled by Cluster Mesh is the ability to distribute traffic across multiple clusters.

To understand how this works, we first need to introduce the concept of a Global Service.

In Kubernetes, a Service is normally limited to the cluster in which it is defined. This means that traffic is load balanced only to pods running within the same cluster.

Cilium introduces the concept of a Global Service: a Service that can aggregate endpoints coming from multiple clusters within the mesh.

When a Service is configured as global, each cluster can see not only the local backends, but also those running in other clusters.
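As a minimal sketch, a Service is marked as global through an annotation on the Service object. The Service name `backend`, the namespace `default`, and the ports are hypothetical; the `service.cilium.io/global` annotation is the one described in the Cilium documentation. The sketch assumes the same Service (same name and namespace) is deployed in every cluster that should contribute backends.

```yaml
# Hypothetical Service, applied with the same name and namespace in each cluster.
apiVersion: v1
kind: Service
metadata:
  name: backend          # assumed name
  namespace: default     # assumed namespace
  annotations:
    # Marks the Service as global so that backends are merged across the mesh.
    service.cilium.io/global: "true"
spec:
  type: ClusterIP
  selector:
    app: my-app
  ports:
    - port: 80
      targetPort: 8080   # assumed container port
```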

Cross-Cluster Load Balancing

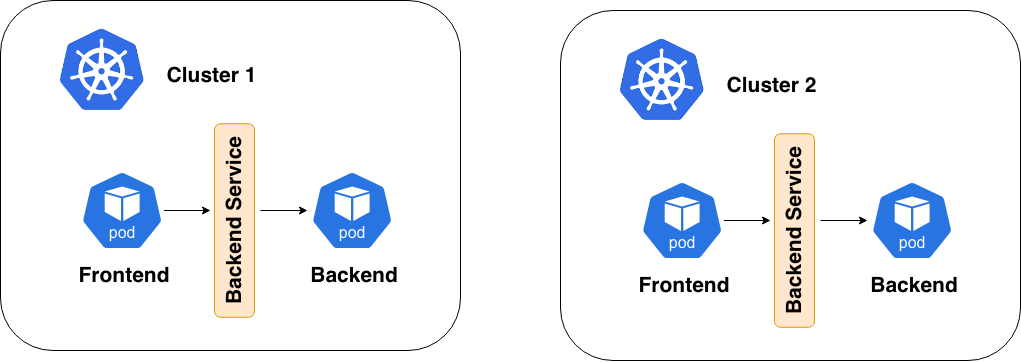

Let’s imagine a service with backends distributed across two clusters.

Without Cluster Mesh:

Each cluster uses only its own backends.

With Cluster Mesh and Global Services, however:

The service can distribute traffic to backends running in different clusters.
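Whether remote backends are used all the time or only as a fallback can be tuned. The sketch below assumes the `service.cilium.io/affinity` annotation described in the Cilium documentation and a hypothetical `backend` Service. With the value `local`, traffic should stay on local backends while any are healthy and go to other clusters only when none are available.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: backend          # assumed name
  annotations:
    service.cilium.io/global: "true"
    # Prefer backends in the local cluster; use remote ones only as a fallback.
    service.cilium.io/affinity: "local"
spec:
  selector:
    app: my-app
  ports:
    - port: 80
```

This is one way to get the cross-cluster failover behavior without sending every request across clusters.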

Requirements for Connecting Multiple Clusters

To create a Cluster Mesh, a few prerequisites must be met.

Non-Overlapping Pod CIDRs

Each cluster must use different IP ranges for pods.

For example:

- Cluster 1 → 11.0.0.0/8

- Cluster 2 → 12.0.0.0/8

This is necessary to avoid routing conflicts.
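One way to keep the ranges apart, assuming Cilium is installed with Helm and uses the cluster-pool IPAM mode, is to give each cluster its own pod CIDR in its values file. The file names and the private ranges below are illustrative placeholders, not the ranges from the example above.

```yaml
# values-cluster1.yaml (hypothetical file name)
ipam:
  mode: cluster-pool
  operator:
    clusterPoolIPv4PodCIDRList:
      - 10.1.0.0/16   # assumed range for cluster 1
---
# values-cluster2.yaml (hypothetical file name)
ipam:
  mode: cluster-pool
  operator:
    clusterPoolIPv4PodCIDRList:
      - 10.2.0.0/16   # assumed range for cluster 2, must not overlap with cluster 1
```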

Connectivity Between Nodes

Nodes from different clusters must be able to reach each other over the network.

Cluster Mesh does not create the underlying connectivity; it relies on connectivity that already exists between the clusters, for example VPC peering or VPN tunnels.

Unique Cluster Identity

Each cluster must have two identifiers that are unique across the mesh:

- a cluster name

- a cluster ID

This allows Cilium components to identify the origin of the traffic.
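With a Helm-based installation, both values are typically set under the `cluster` key. The names and IDs below are illustrative; the name chosen here is also the value that later appears in the `io.cilium.k8s.policy.cluster` label.

```yaml
# Helm values for the first cluster (name and ID are illustrative).
cluster:
  name: cluster-one   # must be unique across the mesh
  id: 1               # numeric ID, must also be unique across the mesh
---
# Helm values for the second cluster.
cluster:
  name: cluster-two
  id: 2
```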

How Clusters Share Information

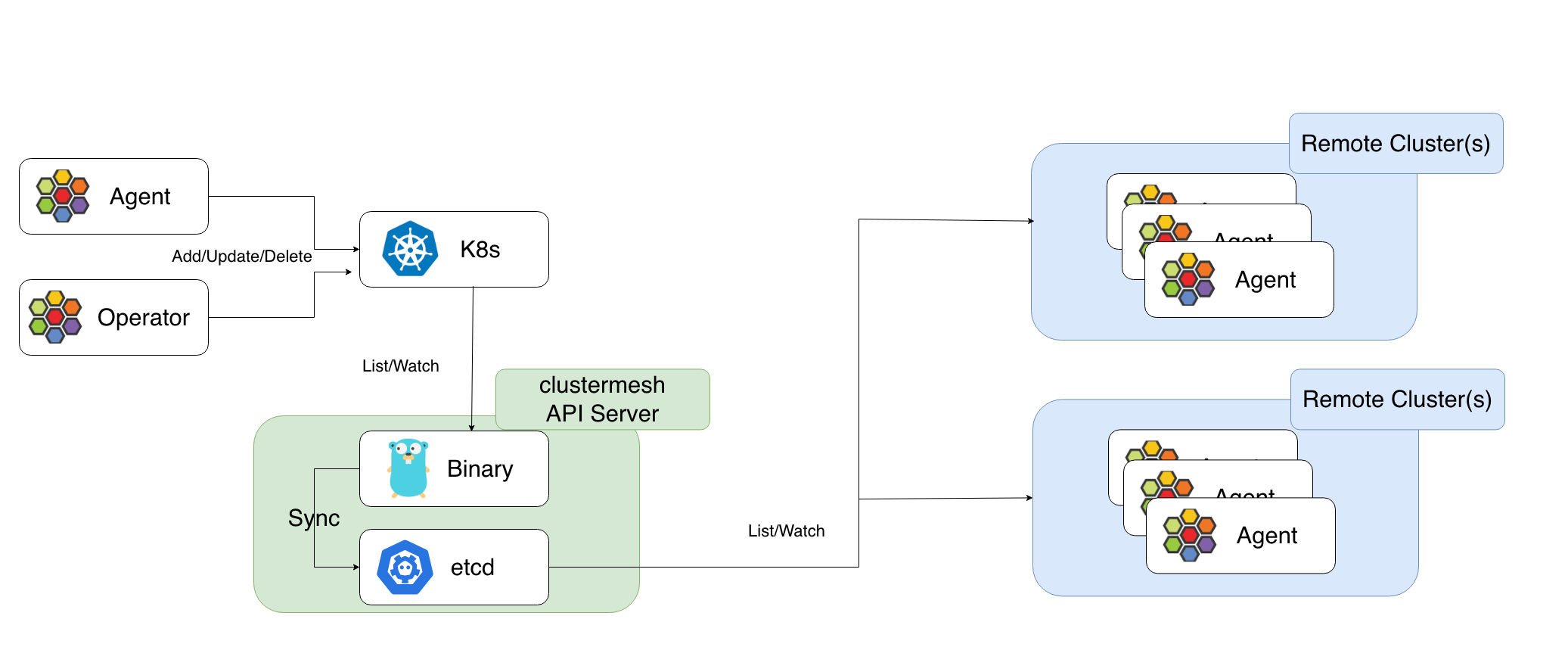

When Cluster Mesh is enabled, Cilium deploys a component called the Cluster Mesh API Server (clustermesh-apiserver) in each cluster.

This component observes the state of the cluster and collects information about:

- services

- endpoints

- nodes

- workload identities

This information is then shared with the other clusters in the mesh.

Thanks to this mechanism, each cluster can have visibility into the other clusters.
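As a rough sketch, assuming a Helm-based installation, the component is switched on through the `clustermesh` values. The service type is an assumption about how the other clusters reach it and depends on the environment.

```yaml
clustermesh:
  useAPIServer: true        # deploy the clustermesh-apiserver
  apiserver:
    service:
      type: LoadBalancer    # assumption: remote clusters reach it through a load balancer
```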

The Scalability Problem of the Initial Model

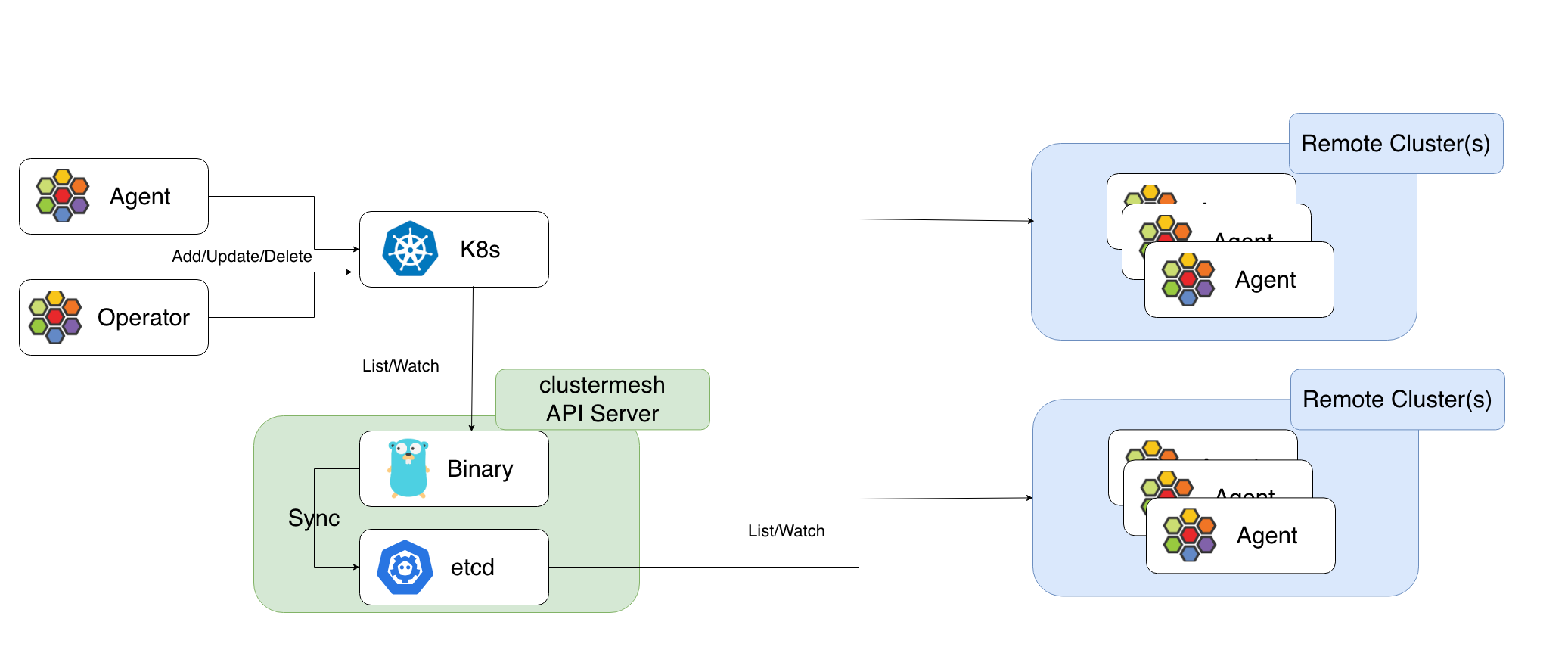

The first Cluster Mesh model worked well in small environments.

However, as the number of clusters and nodes increased, a problem began to emerge.

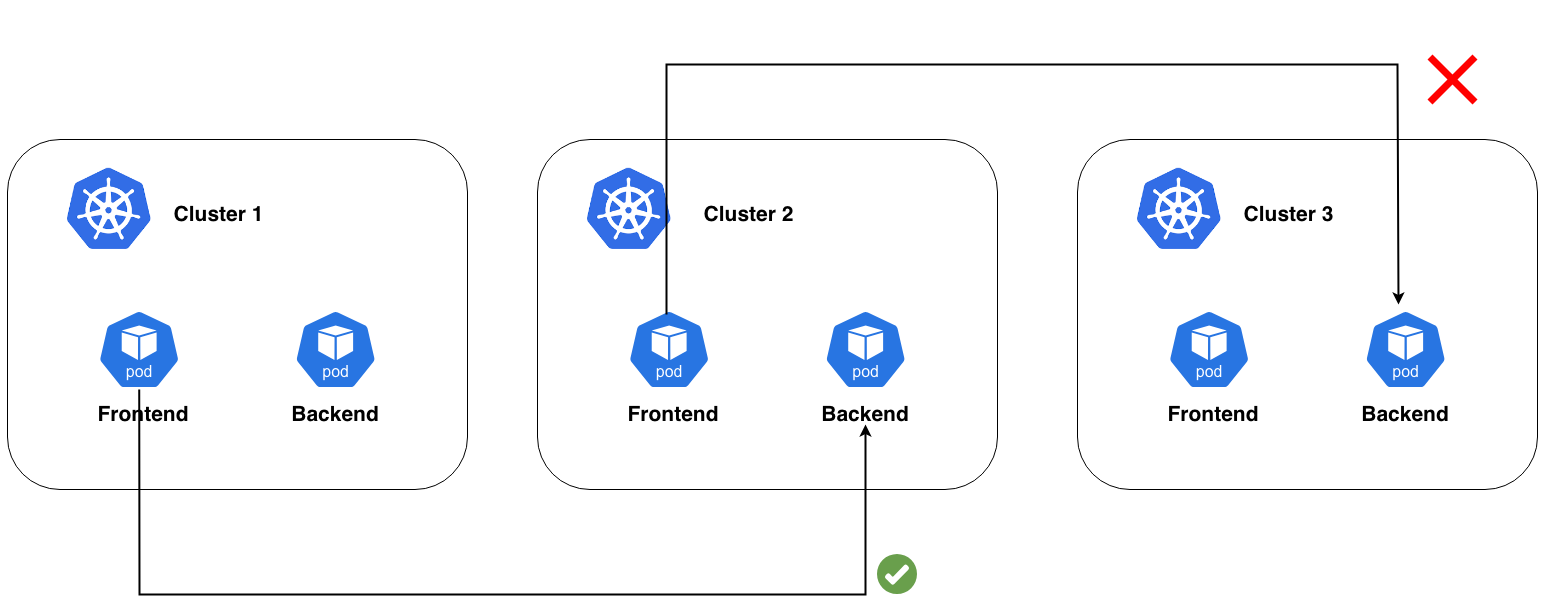

Let’s imagine the following scenario:

- 3 clusters

- 100 nodes per cluster

- 1 Cilium agent per node

This means we have 300 agents.

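In the original model, each agent had to synchronize directly with every other cluster to obtain information about services, endpoints, and identities. In this scenario, each of the 300 agents keeps connections to 2 remote clusters, for a total of 600 cross-cluster connections.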

This generated a large number of synchronization connections, leading to issues such as:

- increased load on etcd

- higher latency

- scalability limitations

A different approach was needed.

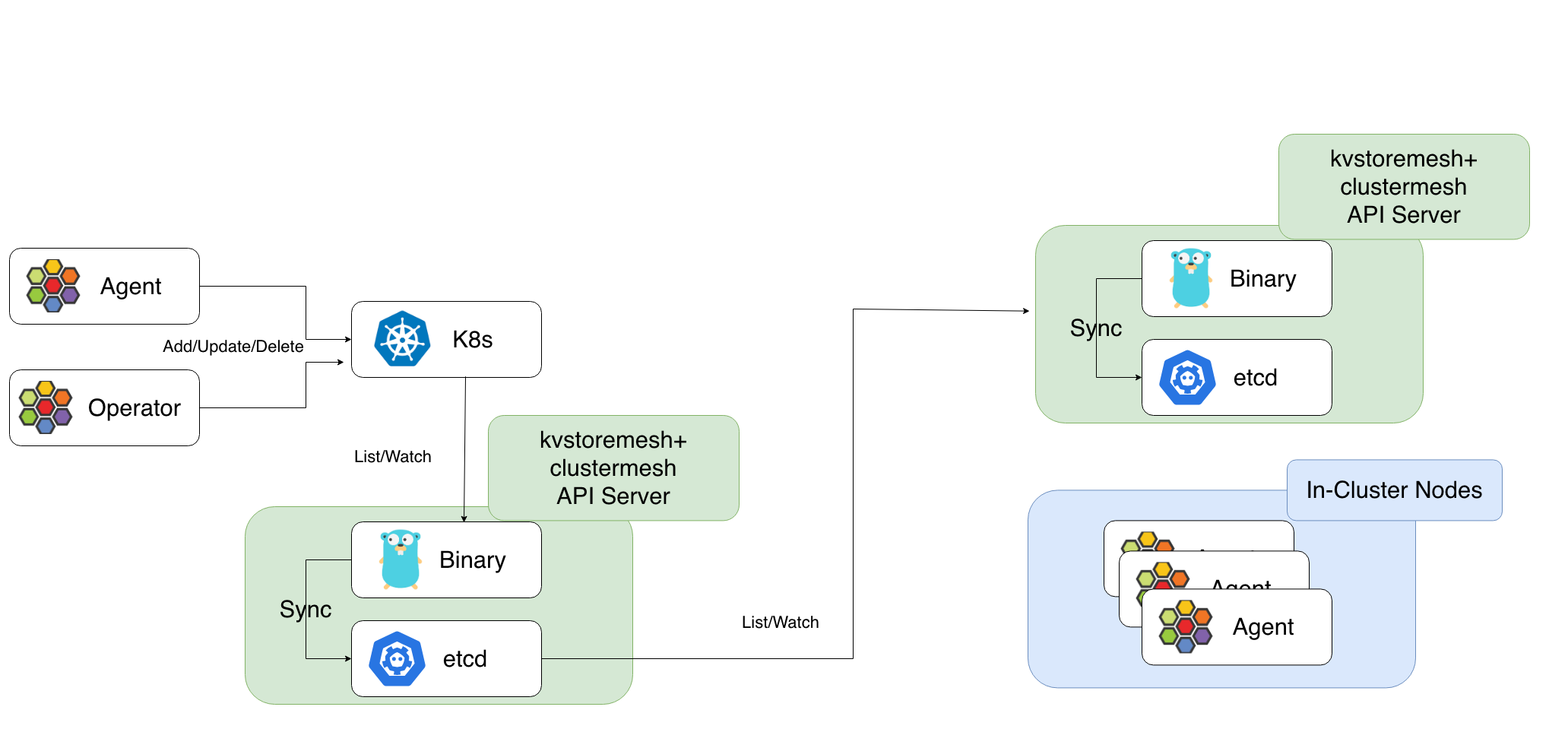

The Solution: KV Store Mesh

To solve this problem, KV Store Mesh (spelled KVStoreMesh in the Cilium documentation) was introduced. The idea is to move the synchronization process from individual agents to the clusters themselves.

Before:

agent → every remote cluster

After:

agent → local cluster

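Agents communicate only with their local datastore, while synchronization happens between the clusters’ datastores. With 3 clusters, that amounts to 3 × 2 = 6 cross-cluster synchronization links, regardless of how many nodes each cluster has.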

This drastically reduces:

- the number of connections

- synchronization traffic

- the load on etcd

The result is a much more scalable architecture.
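In a Helm-based installation, this mode is controlled by a flag under the Cluster Mesh API Server settings. The fragment below is a sketch under that assumption; recent Cilium releases may already enable it by default, so check the defaults of the chart version in use.

```yaml
clustermesh:
  useAPIServer: true
  apiserver:
    kvstoremesh:
      enabled: true   # run KVStoreMesh alongside the clustermesh-apiserver
```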

Cross-Cluster Security

In a multi-cluster environment, it’s essential that security policies can distinguish the origin of traffic across different clusters.

Cilium’s networking system makes it possible to enforce cluster-aware network policies by using the label:

io.cilium.k8s.policy.cluster

This label identifies which cluster the traffic comes from and can be used inside CiliumNetworkPolicy resources to control communication between workloads distributed across different clusters.

An important aspect of policies in Cluster Mesh is that they are not global: a policy applies only to the cluster where it is created. If you want to enforce the same rule across multiple clusters, you must distribute the policy manually to each cluster.

Example 1 – Allow Traffic Only from the Frontend

In the following example, we define a policy that allows traffic to the backend (the pods labeled app=my-app) only from pods with the label app=frontend.

    apiVersion: cilium.io/v2
    kind: CiliumNetworkPolicy
    metadata:
      name: allow-frontend
    spec:
      endpointSelector:
        matchLabels:
          app: my-app
      ingress:
        - fromEndpoints:
            - matchLabels:
                app: frontend

With this configuration:

- frontend pods can communicate with the backend

- all other pods are automatically blocked

This behavior comes from Cilium’s default-deny model: once a policy with ingress rules selects an endpoint, all ingress traffic to that endpoint that is not explicitly allowed is denied.

Example 2 – Allow Traffic Only from the Frontend of a Specific Cluster

You can make the policy even more restrictive by also filtering based on the origin cluster of the traffic.

    apiVersion: cilium.io/v2
    kind: CiliumNetworkPolicy
    metadata:
      name: allow-frontend-from-cluster-one
    spec:
      endpointSelector:
        matchLabels:
          app: my-app
      ingress:
        - fromEndpoints:
            - matchLabels:
                app: frontend
                io.cilium.k8s.policy.cluster: cluster-one

With this configuration:

- the frontend in cluster-one can communicate with the backend

- frontends running in other clusters are blocked

This enables granular security controls in multi-cluster scenarios.

Enforcing Cross-Cluster Security

Using the label io.cilium.k8s.policy.cluster makes it possible to define policies that take into account the origin cluster of the traffic.

This enables you to:

- limit which clusters can communicate with a given service

- isolate different environments (for example, production and staging)

- implement zero-trust security models even in multi-cluster architectures
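As a sketch of the first use case, the cluster label can also be used on its own, without an application label, to admit traffic from one cluster only. The policy name is hypothetical, and `cluster-one` is assumed to be the name of the trusted cluster. Because this is a namespaced policy, the selector is expected to match only workloads in the policy's own namespace.

```yaml
apiVersion: cilium.io/v2
kind: CiliumNetworkPolicy
metadata:
  name: allow-only-cluster-one   # hypothetical name
spec:
  endpointSelector:
    matchLabels:
      app: my-app
  ingress:
    - fromEndpoints:
        - matchLabels:
            # Any workload in this namespace, as long as it runs in cluster-one.
            io.cilium.k8s.policy.cluster: cluster-one
```

As with the other policies, this one would need to be applied in every cluster that runs the protected workload.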

Why Cluster Mesh Matters

Cluster Mesh is not just a networking feature.

It’s a real architectural enabler.

It makes it possible to build distributed Kubernetes platforms where:

- applications can scale across multiple clusters

- traffic can be distributed across regions

- failover can happen automatically

- security remains consistent

All while keeping clusters operationally independent.

Conclusion

As Kubernetes platforms continue to grow, multi-cluster architectures are becoming increasingly common.

Cilium Cluster Mesh offers an elegant way to connect these clusters while maintaining consistent networking across distributed workloads.

Thanks to features such as Global Services and KV Store Mesh, multiple Kubernetes clusters can be treated as a single distributed logical environment.

And that’s exactly the kind of infrastructure modern cloud-native platforms increasingly need.